Launched in November 2022, ChatGPT has undoubtedly made many daily tasks easier, especially in the workplace. People use it to write emails, reports, presentations, business plan drafts, and even articles (not this one, though!).

Have you ever wondered, however, about the environmental impact of ChatGPT performing even a simple task?

In fact, the hidden environmental costs of AI models are often overlooked. ICT studies have shown that the AI sector contributes 2-4% of the global greenhouse gas emissions produced by the entire tech industry.

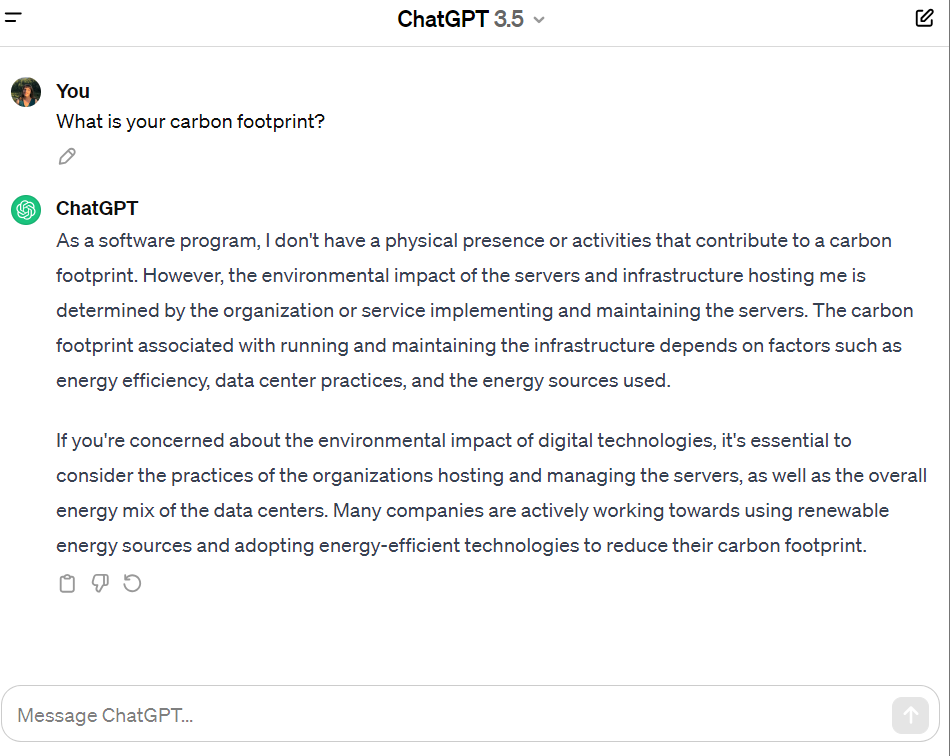

When it comes to ChatGPT, it is really difficult to assess its lifetime carbon footprint. Various authors have tried to compare it with other AI-based systems, and even if we ask the machine itself, the answer is quite nebulous and does not mention its training phase (which is the most energy-consuming).

Researchers have indeed estimated that training a “single large language deep learning model” such as OpenAI’s GPT-4 emits around 300 tons of CO2. For comparison, the average person is responsible for around 5 tons of CO2 a year.
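
To put those two figures side by side, here is a minimal sketch of the comparison. It simply divides one number quoted above by the other; the function name `person_years_equivalent` is made up for illustration, and both inputs are rough estimates rather than measured values.

```python
# Figures quoted in the text above; both are rough estimates.
TRAINING_EMISSIONS_TONS = 300    # CO2 attributed to training one large model
PER_PERSON_TONS_PER_YEAR = 5     # CO2 attributed to an average person per year


def person_years_equivalent(training_tons: float, per_person_tons: float) -> float:
    """Return how many years of one person's emissions a training run equals."""
    if per_person_tons <= 0:
        raise ValueError("per_person_tons must be positive")
    return training_tons / per_person_tons


equivalent = person_years_equivalent(TRAINING_EMISSIONS_TONS, PER_PERSON_TONS_PER_YEAR)
print(f"One training run is roughly {equivalent:.0f} person-years of emissions")
```

In other words, under these estimates a single training run emits about as much CO2 as 60 people do in a year.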

As ChatGPT itself points out in its answer, a large amount of energy is used by host servers and infrastructure, and the total depends on factors such as energy efficiency, data center practices, and the energy source adopted (which, spoiler alert, is not always a renewable one).

The data center industry is indeed responsible for 2–3% of global greenhouse gas (GHG) emissions. A data center is a physical facility designed to house and manage information and communications technology systems.

Meanwhile, the volume of data across the world doubles every two years.

The data centers that store this ever-expanding sea of information require huge amounts of energy and water (directly for cooling, and indirectly for generating non-renewable electricity) to run their servers, equipment, and cooling systems.

By pointing this out, I am not denying that AI technologies are an important asset in today’s society or that they could help advance research in various fields. However, there is little transparency when it comes to high-tech and AI companies disclosing their emissions and, for this reason, all-encompassing studies are lacking.

Given all that, there are movements to make AI modeling, deployment, and usage more environmentally sustainable. Their goal is to replace power-hungry approaches with more suitable and environmentally conscious alternatives.

Change is needed from both vendors and users to make AI algorithms green so that their utility can be widely deployed without harm to the environment.

Generative models (such as ChatGPT) in particular, given their high energy consumption, need to become greener before they become more pervasive. Researchers from Harvard have listed some proposals to that end:

- Use existing large generative models, don’t generate your own.

- Fine-tune existing models.

- Use energy-conserving computational methods.

- Use a large model only when it offers significant value.

- Be discerning about when you use generative AI.

- Evaluate the energy sources of your cloud provider or data center.

- Re-use models and resources.

- Include AI activity in your carbon monitoring.
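
As an illustration of the last point, here is a minimal sketch of how AI activity could be folded into a carbon estimate, assuming emissions are approximated as electricity used multiplied by the carbon intensity of the energy source. The `AIWorkload` class, the workload names, and every number below are hypothetical placeholders, not real measurements; actual figures would have to come from your cloud provider or data center.

```python
from dataclasses import dataclass


@dataclass
class AIWorkload:
    name: str
    energy_kwh: float                 # metered or estimated electricity use
    grid_intensity_kg_per_kwh: float  # kg CO2e per kWh for the energy source


def emissions_kg(workload: AIWorkload) -> float:
    """Estimate emissions as energy used times carbon intensity."""
    return workload.energy_kwh * workload.grid_intensity_kg_per_kwh


def total_emissions_kg(workloads: list[AIWorkload]) -> float:
    """Sum the estimated emissions of all tracked AI workloads."""
    return sum(emissions_kg(w) for w in workloads)


# Hypothetical values, for illustration only.
workloads = [
    AIWorkload("fine-tuning job", energy_kwh=1200.0, grid_intensity_kg_per_kwh=0.4),
    AIWorkload("monthly inference", energy_kwh=300.0, grid_intensity_kg_per_kwh=0.1),
]

for w in workloads:
    print(f"{w.name}: {emissions_kg(w):.1f} kg CO2e")
print(f"Total: {total_emissions_kg(workloads):.1f} kg CO2e")
```

The same energy use can produce very different emissions depending on the energy source, which is why evaluating your provider's energy mix appears on the list alongside monitoring.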

Of course, there are many considerations involved with the use of generative AI models by organizations and individuals: ethical, legal, and even philosophical and psychological. Ecological concerns, however, are worthy of being added to the mix.

In fact, we can debate the long-term implications of these technologies for humanity, but such considerations will be useless if we don’t have a habitable planet to debate them on.